Apache Spark

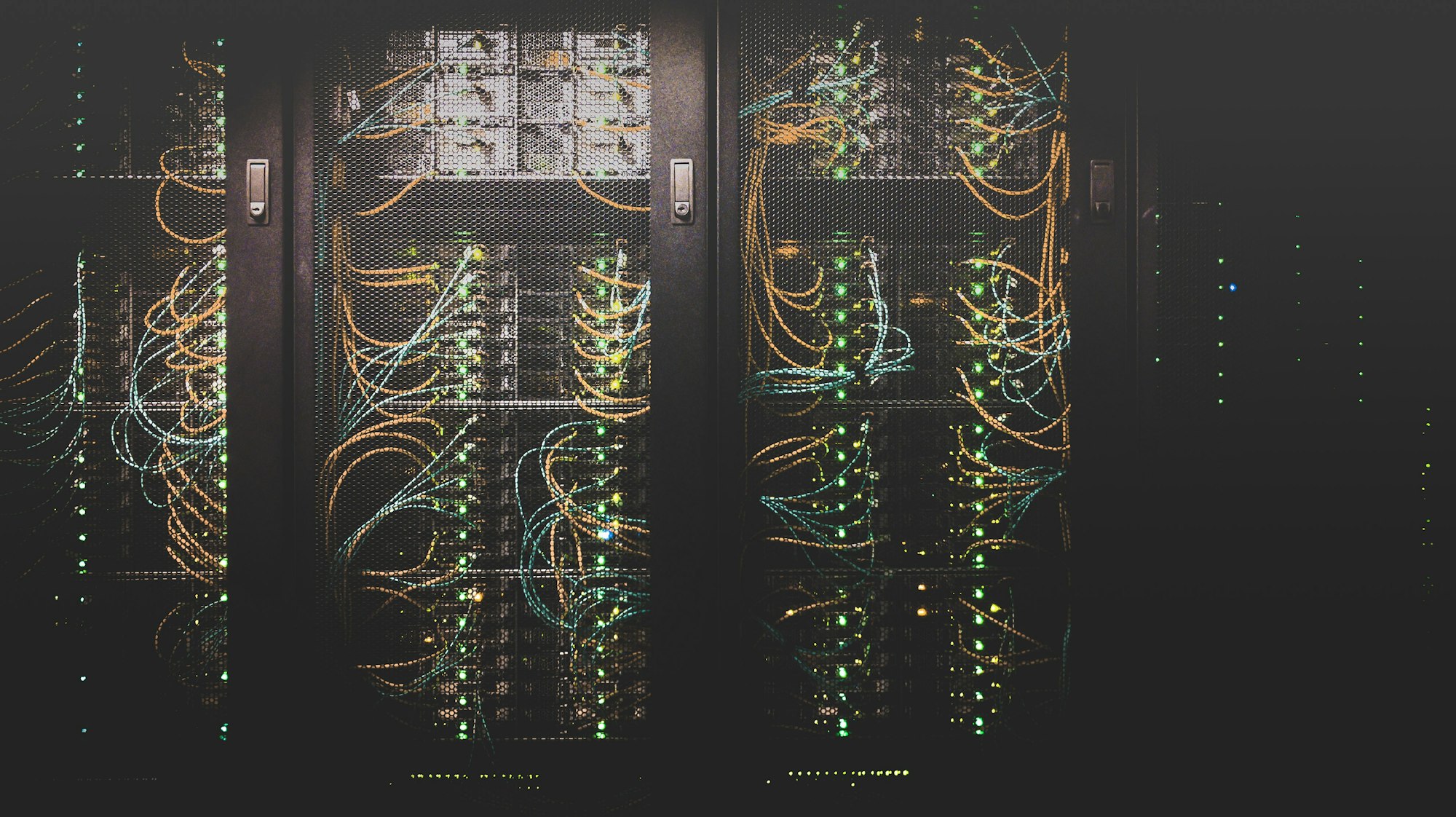

Lightning-fast cluster computing. Process massive datasets at scale with our Apache Spark engineering expertise.

Get Started Today

Platform Overview

Apache Spark™ is a multi-language engine for executing data engineering, data science, and machine learning on single-node machines or clusters. It provides high-level APIs in Java, Scala, Python, and R, and an optimized engine that supports general execution graphs. It also supports a rich set of higher-level tools including Spark SQL for SQL and structured data processing, pandas API on Spark for pandas workloads, MLlib for machine learning, GraphX for graph processing, and Structured Streaming for incremental computation and stream processing.

The Enterprise-Grade AI Tools That Power Results

We optimize Spark clusters for maximum performance using Spark SQL, Structured Streaming, and MLlib for distributed machine learning.

Launch Faster and Smarter

Your Proven Path to AI Supremacy: From Vision to Victory

Strategic cluster configuration and optimization for large-scale data workloads.

Assess Requirements

Identify workloads, scalability needs, and business objectives.

Platform Selection

Choose the right cloud platform based on performance, cost, and use case.

Architecture Design

Design systems optimized for the selected cloud environment.

Migration & Deployment

Migrate applications and deploy systems on cloud platforms.

Integration & Optimization

Ensure seamless integration and optimize performance and cost.

Optimize & Manage

Continuously monitor usage and scale infrastructure as needed.

Why Visionary Leaders Choose ETY to Crush the Competition

We specialize in fine-tuning Spark performance to reduce execution time and cloud compute costs.
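As a rough illustration of what this kind of tuning can involve, the sketch below collects a few standard Spark configuration properties and turns them into a `spark-submit` command. The property names are real Spark settings, but the values, the `etl_job.py` script name, and the `spark_submit_command` helper are hypothetical placeholders. Sensible values depend on the workload, cluster size, and Spark version.

```python
import shlex

# Illustrative starting values only (assumptions, not recommendations):
# every value here should be validated against the actual workload.
TUNING_CONF = {
    # Adaptive Query Execution re-optimizes query plans at runtime.
    "spark.sql.adaptive.enabled": "true",
    # Number of partitions used when shuffling data for joins and aggregations.
    "spark.sql.shuffle.partitions": "400",
    # Kryo is usually faster and more compact than Java serialization.
    "spark.serializer": "org.apache.spark.serializer.KryoSerializer",
    # Dynamic allocation releases idle executors, which can lower compute cost.
    "spark.dynamicAllocation.enabled": "true",
    # Lets dynamic allocation work without an external shuffle service (Spark 3.0+).
    "spark.dynamicAllocation.shuffleTracking.enabled": "true",
    "spark.executor.memory": "8g",
    "spark.executor.cores": "4",
}


def spark_submit_command(app_path, conf):
    """Build a spark-submit argument list with one --conf flag per setting."""
    args = ["spark-submit"]
    for key, value in sorted(conf.items()):
        args += ["--conf", f"{key}={value}"]
    args.append(app_path)
    return args


# "etl_job.py" is a hypothetical application script.
command = spark_submit_command("etl_job.py", TUNING_CONF)
print(shlex.join(command))
```

Keeping the settings in one dictionary makes it easier to compare execution time and cost between runs, since each experiment changes only a named value rather than a hand-edited command line.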

Let's Build AI That Wins

Real-time Streaming

Implement low-latency data processing pipelines that react to events as they happen.

Distributed Intelligence

Scale your machine learning models to petabytes of data using Spark's distributed processing power.